Measuring the performance of dedicated development teams – how do you measure team performance

We'll discuss dedicated team performance metrics and show you how to make informed decisions and improve the efficiency of your dedicated product team.

With best practices for managing dedicated development teams covered, the next step is measuring performance.

If you’ve asked “how do you measure team performance,” this guide connects strategy with day-to-day execution so you can act with confidence.

Whenever we assemble dedicated teams for our clients, we know that the success and the quality of work we deliver depend heavily on the ability to effectively measure performance.

That means choosing clear team metrics and agreeing on how to evaluate team performance before work starts.

You need to identify strengths, address weaknesses, and optimize your team's output. This involves establishing clear goals, defining SMART objectives, and employing performance metrics such as cycle time, code stability, software reliability, and quality assurance.

We also cover which tools help you track team performance and how to measure team effectiveness in distributed environments.

We'll discuss all of these and teach you how to make informed decisions and improve the efficiency of your dedicated product team.

What is a dedicated development team, and how do you measure team performance?

A dedicated development team is a group of specialized professionals, including developers, designers, testers, and project managers, who work exclusively on a specific project or client's needs.

Unlike traditional collaboration models, where individuals are brought together temporarily and can work on multiple projects simultaneously, dedicated teams are assembled with a long-term focus solely on one project, ensuring full-time commitment and a deep understanding of the client's requirements.

By providing dedicated resources, this model offers increased productivity, faster time-to-market, better control over project scope, and improved flexibility to adapt to your changing needs.

When measuring team performance in this model, align expectations early and document the team performance metrics you’ll review at each milestone.

Setting the right goals for your dedicated team – how do you measure team performance in practice?

Without the right goals, measuring the performance of dedicated development teams can quickly become futile, similar to navigating uncharted waters without a compass. Clear goals make evaluating team performance consistent and fair. Here’s what you should know about goals.

SMART goals

First of all, your goals should be SMART. This framework helps you set specific targets, track progress, and align your efforts with the desired outcomes. Let's break down each component:

- Specific. Clear, well-defined goals that focus on a specific outcome should answer the questions of what needs to be accomplished, who is involved, and why it is important.

- Measurable. Include tangible (quantitative or qualitative) metrics or criteria to track progress and determine success. Pick team productivity metrics and at least one team metric tied to user-visible outcomes.

- Achievable. Goals should be realistic and attainable within the given resources and constraints, which means they should stretch the team's capabilities but remain pragmatic to maintain motivation and commitment.

- Relevant. Align your goals with your overall objectives and priorities.

- Time-bound. Goals should have a defined timeline or deadline for completion to create accountability and a sense of urgency.

Objectives and key results (OKRs) for measuring team performance

The Objectives and Key Results (OKR) approach is another powerful goal-setting framework that begins with crafting clear and inspiring objectives, which serve as guiding lights, igniting motivation and focus. They provide direction, purpose, and a shared vision for the team.

To measure progress, you define key results: specific, measurable indicators that track your journey towards those objectives. They quantify the desired outcomes and serve as benchmarks for success. Use OKRs to evaluate team performance every quarter and to guide team performance measurement without micromanaging tasks.

Regular check-ins and updates will keep you on track, allowing for adaptability and continuous improvement.
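
To make key results concrete, here is a minimal Python sketch of how progress towards an objective could be scored. It assumes each key result has a starting value, a target, and a current reading, and it averages key results without weighting. The class, field names, and sample figures are illustrative assumptions, not part of any OKR tool.

```python
from dataclasses import dataclass


@dataclass
class KeyResult:
    """One measurable indicator attached to an objective (illustrative structure)."""
    name: str
    start: float    # value when the period began
    target: float   # value the team wants to reach
    current: float  # latest measured value

    def progress(self) -> float:
        """Share of the distance from start to target covered so far, clamped to 0..1."""
        span = self.target - self.start
        if span == 0:
            return 1.0
        return max(0.0, min(1.0, (self.current - self.start) / span))


def objective_progress(key_results: list[KeyResult]) -> float:
    """Unweighted average of key-result progress (weighting is a team decision)."""
    if not key_results:
        return 0.0
    return sum(kr.progress() for kr in key_results) / len(key_results)


# Made-up sample data: one metric that should go down, one that should go up.
key_results = [
    KeyResult("Median cycle time (days)", start=10.0, target=6.0, current=8.0),
    KeyResult("Automated test coverage (%)", start=60.0, target=80.0, current=75.0),
]
print(f"Objective progress: {objective_progress(key_results):.1%}")
```

Because progress is measured relative to the start and target values, the same formula works for metrics you want to lower (cycle time) and metrics you want to raise (coverage).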

Performance metrics

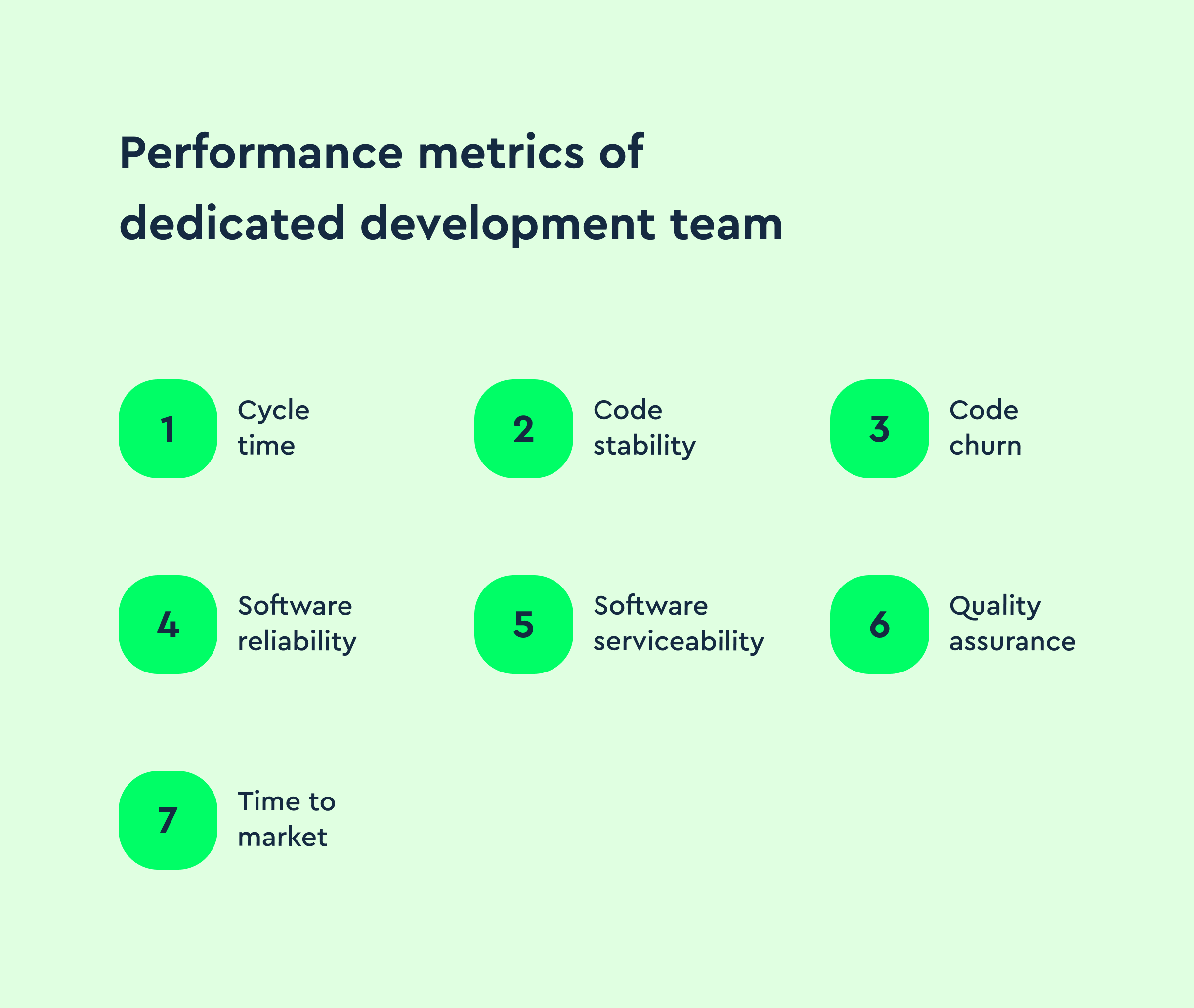

The following seven performance metrics give you insight into the effectiveness and efficiency of your dedicated development teams.

Think of these as practical metrics to measure team performance and as a baseline for assessing team performance during delivery.

Cycle time

Cycle time is the time a task or feature takes to move through the development process, from initiation to completion. To measure it, track the start and end timestamps of each task or feature. The difference between these timestamps is the cycle time for that specific item.

Several tools can assist in measuring cycle time. Jira and Trello offer built-in features for tracking task durations. Moreover, Kanban boards or other team collaboration platforms can visually represent work progress, facilitating cycle time measurement.

Cycle time is a reliable answer when someone asks how to measure team performance without tracking hours.
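
The timestamp arithmetic described above can be sketched in a few lines of Python. The task IDs and timestamps below are made up, and the tuple layout is an assumption about what an export from your tracker might look like rather than the format of any specific tool.

```python
from datetime import datetime
from statistics import median

# Hypothetical tracker export: (task id, started, completed) as ISO 8601 strings.
tasks = [
    ("TASK-1", "2025-03-03T09:00:00", "2025-03-05T09:00:00"),
    ("TASK-2", "2025-03-04T10:00:00", "2025-03-05T16:00:00"),
    ("TASK-3", "2025-03-05T08:00:00", "2025-03-10T08:00:00"),
]


def cycle_time_days(started: str, completed: str) -> float:
    """Elapsed calendar time between the two timestamps, in days."""
    delta = datetime.fromisoformat(completed) - datetime.fromisoformat(started)
    return delta.total_seconds() / 86400


cycle_times = {task_id: cycle_time_days(s, e) for task_id, s, e in tasks}
median_cycle_time = median(cycle_times.values())

for task_id, days in cycle_times.items():
    print(f"{task_id}: {days:.2f} days")
print(f"Median: {median_cycle_time:.2f} days")
```

The median is used here instead of the mean so that one unusually long task does not dominate the summary; which statistic you report is a judgment call for your team.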

Code stability

Code stability has to do with the robustness and reliability of the codebase. It measures the extent to which the code functions as intended and remains free from errors, bugs, and unexpected behavior.

Measuring this metric involves conducting thorough code reviews, running comprehensive unit tests, and utilizing static code analysis tools.

Code reviews help identify potential issues and promote adherence to coding standards, while unit tests verify the correctness of individual code components. Static code analysis tools, like SonarQube or ESLint, analyze the codebase for potential bugs, vulnerabilities, and maintainability concerns.

Error budgets and change failure rate turn code stability into actionable team success metrics.
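
As a rough illustration, change failure rate is the share of deployments that led to a failure in production. The sketch below assumes you can label each deployment with whether it needed a rollback, a hotfix, or triggered an incident; the `Deployment` structure and the sample data are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    version: str
    caused_failure: bool  # True if it needed a rollback, a hotfix, or triggered an incident


def change_failure_rate(deployments: list[Deployment]) -> float:
    """Fraction of deployments that caused a failure (0.0 when there are none)."""
    if not deployments:
        return 0.0
    failures = sum(1 for d in deployments if d.caused_failure)
    return failures / len(deployments)


# Made-up release history: 8 deployments, 2 of which caused a failure.
deployments = [
    Deployment("1.0.0", False),
    Deployment("1.0.1", True),
    Deployment("1.1.0", False),
    Deployment("1.1.1", False),
    Deployment("1.2.0", False),
    Deployment("1.2.1", True),
    Deployment("1.3.0", False),
    Deployment("1.3.1", False),
]
print(f"Change failure rate: {change_failure_rate(deployments):.0%}")
```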

Code churn

The rate of code changes or modifications within a development team's codebase over a specific period is called code churn. It measures the frequency and extent of code revisions, additions, and deletions.

Code churn is quite helpful in assessing code stability, identifying areas of high activity or potential instability, and understanding the impact of changes on overall development efforts.

Measuring code churn involves tracking the number of lines added, modified, or removed within the codebase. Version control systems like Git provide valuable insights into code churn through commit history analysis.

Analyzing trends and patterns in code churn helps your team better understand the codebase's evolution, identify potential risks, and prioritize areas for code refactoring or optimization.

You can measure code churn using code analysis platforms like SonarQube, which provide insights into code changes and churn metrics. Additionally, integrated development environments (IDEs) like Visual Studio Code or IntelliJ IDEA often offer plugins or extensions that facilitate code churn analysis.

Track hotspots by component to monitor team performance without encouraging pointless rewrites.
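
One way to find hotspots is to sum added and deleted lines per file from Git's numstat output, then roll the totals up by directory. The sketch below parses a hard-coded sample so that it runs anywhere; in practice you would feed it the output of a command such as `git log --numstat --pretty=format:`. Treating the first two path segments as a "component" is an assumption for illustration only.

```python
from collections import Counter

# Sample in numstat layout: added<TAB>deleted<TAB>path, with "-" for binary files.
sample_numstat = """\
12\t4\tsrc/billing/invoice.py
3\t0\tsrc/billing/tax.py
-\t-\tdocs/diagram.png

40\t35\tsrc/billing/invoice.py
7\t2\tsrc/auth/session.py
"""


def churn_by_file(numstat_text: str) -> Counter:
    """Total lines added plus deleted per file, skipping binary entries."""
    churn = Counter()
    for line in numstat_text.splitlines():
        parts = line.split("\t")
        if len(parts) != 3:
            continue  # blank separators between commits or unexpected lines
        added, deleted, path = parts
        if added == "-" or deleted == "-":
            continue  # binary files have no line counts
        churn[path] += int(added) + int(deleted)
    return churn


def churn_by_component(file_churn: Counter, depth: int = 2) -> Counter:
    """Roll file-level churn up to the first `depth` path segments."""
    components = Counter()
    for path, lines in file_churn.items():
        components["/".join(path.split("/")[:depth])] += lines
    return components


file_churn = churn_by_file(sample_numstat)
component_churn = churn_by_component(file_churn)
for component, lines in component_churn.most_common():
    print(f"{component}: {lines} lines changed")
```

High churn is a prompt to look closer, not a verdict: a component may change often because it is under active, healthy development.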

Software reliability

Software reliability is the ability of a software application or system to perform its intended functions consistently and accurately, without failures or errors, under specified conditions and for a specified period. It is critical to software quality, as reliable software minimizes the risk of system crashes, data loss, and disruptions.

Measuring software reliability involves four types of software testing. Functional testing ensures the software meets its specified requirements and performs as expected. Load testing and stress testing assess how the software performs under varying workloads and extreme conditions. Performance testing measures the system's responsiveness, resource usage, and stability under different scenarios. These tests can be automated, which reduces the risk of human error in repetitive processes, lets teams validate behavior consistently across normal and extreme operating conditions, and surfaces potential issues faster.

Useful testing frameworks for measuring software reliability include Selenium for functional testing and Apache JMeter for load testing and stress testing. Error monitoring and logging tools like Sentry and Logstash also help identify and track errors.

Add service-level objectives to make measuring team effectiveness concrete for reliability work.
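
A service-level objective becomes measurable once you compare it with actual request counts. The following sketch assumes a request-based availability SLO and shows how much of the resulting error budget is still unspent; the function names and the sample numbers are illustrative assumptions.

```python
def availability(good_events: int, total_events: int) -> float:
    """Share of events that succeeded; treated as 1.0 when there was no traffic."""
    if total_events == 0:
        return 1.0
    return good_events / total_events


def error_budget_remaining(slo_target: float, good_events: int, total_events: int) -> float:
    """Fraction of the error budget still unspent (negative if the budget is overspent).

    slo_target is a fraction strictly between 0 and 1, for example 0.999.
    """
    if not 0.0 < slo_target < 1.0:
        raise ValueError("slo_target must be strictly between 0 and 1")
    if total_events == 0:
        return 1.0
    allowed_bad = (1.0 - slo_target) * total_events
    actual_bad = total_events - good_events
    return 1.0 - actual_bad / allowed_bad


# Made-up month: 1,000,000 requests, 400 failures, against a 99.9% target.
total, good = 1_000_000, 999_600
print(f"Availability: {availability(good, total):.4%}")
print(f"Error budget remaining: {error_budget_remaining(0.999, good, total):.1%}")
```

A team with plenty of budget left can afford riskier releases, while a team that has overspent it has a clear signal to prioritize reliability work.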

Software serviceability

Software serviceability refers to the ease with which software can be diagnosed, maintained, and repaired when issues arise. It encompasses the ability to identify and resolve software defects, apply patches or updates, and provide efficient technical support.

To measure this metric, consider factors such as the responsiveness of technical support, the efficiency of bug tracking and resolution processes, and the clarity and accessibility of documentation. Metrics like mean time to repair (MTTR) and customer satisfaction ratings can provide more insights into serviceability.

Valuable tools for measuring and enhancing software serviceability include customer support platforms like Zendesk or Freshdesk, bug tracking systems like Jira or Bugzilla, and knowledge base software like Confluence.

If you need to show how to measure the success of a team that owns a platform, trend MTTR, incident count, and post-incident action follow-through.
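
MTTR is straightforward to compute once incidents are logged with a detection time and a resolution time. Here is a minimal sketch; the incident records are made up, and the tuple layout is an assumption about how an incident log might be exported.

```python
from datetime import datetime, timedelta

# Hypothetical incident log: (detected, resolved) as ISO 8601 strings.
incidents = [
    ("2025-04-01T10:00:00", "2025-04-01T10:30:00"),
    ("2025-04-09T22:15:00", "2025-04-09T23:45:00"),
    ("2025-04-20T06:00:00", "2025-04-20T07:00:00"),
]


def mean_time_to_repair(incidents: list[tuple[str, str]]) -> timedelta:
    """Average time from detection to resolution across all incidents."""
    if not incidents:
        return timedelta(0)
    durations = [
        datetime.fromisoformat(resolved) - datetime.fromisoformat(detected)
        for detected, resolved in incidents
    ]
    return sum(durations, timedelta(0)) / len(durations)


mttr = mean_time_to_repair(incidents)
print(f"Incidents: {len(incidents)}, MTTR: {mttr}")
```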

Quality assurance

Quality assurance (QA) ensures that software products or applications meet defined quality standards and user expectations and involves preventing defects, identifying and resolving issues, and verifying that the software functions as intended. Testing methodologies include functional testing, regression testing, performance testing, and usability testing.

To measure QA:

- Define clear quality metrics and standards based on user requirements and industry best practices.

- Conduct thorough testing at each stage of the software development lifecycle, from unit testing to system testing and acceptance testing.

- Utilize test management tools like TestRail or Zephyr to organize and track test cases, results, and defects.

- Implement continuous integration and continuous testing practices to identify and fix issues early.

QA dashboards are useful when stakeholders ask how to measure team success during rapid releases.

Time to market

Time to market refers to the elapsed time between initiating a software development project and releasing the final product to the market or end-users.

It measures the speed and efficiency of a dedicated development team to deliver a software solution or feature to meet customer demands and gain a competitive advantage.

Tools for measuring and improving time to market include Jira for tracking work, Git for version control and code collaboration, and continuous integration and deployment (CI/CD) tools like Jenkins or CircleCI for automating the software release process.

Add GitHub Actions to the list for 2025 – the service is now a common choice for CI/CD in dedicated teams.

How to measure team performance day to day

Measuring team performance in a development context blends process data and outcomes. To evaluate team performance, select a small set of team performance metrics and review them weekly. Start with delivery signals like throughput and lead time. Add quality signals like escaped defects. Include customer signals like adoption and task success. When you need to measure team performance online across distributed teams, instrument your work system so you’re measuring work system performance with traceable, transparent data. This approach answers the practical question: how do you measure team performance without creating distracting bureaucracy?
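
For the weekly review, throughput and lead time can be derived from the same list of delivered work items. The sketch below groups items by the ISO week in which they were delivered; the dates are made up, and measuring lead time from request to delivery is an assumption you may want to adjust to your own workflow.

```python
from collections import defaultdict
from datetime import date

# Hypothetical work items: (requested on, delivered on).
items = [
    (date(2025, 3, 3), date(2025, 3, 6)),
    (date(2025, 3, 2), date(2025, 3, 7)),
    (date(2025, 3, 5), date(2025, 3, 12)),
]


def weekly_delivery_signals(items):
    """Group items by the ISO week they were delivered in.

    Returns {(iso_year, iso_week): {"throughput": n, "avg_lead_time_days": x}}.
    """
    lead_times = defaultdict(list)
    for requested, delivered in items:
        iso = delivered.isocalendar()
        lead_times[(iso[0], iso[1])].append((delivered - requested).days)
    return {
        week: {"throughput": len(days), "avg_lead_time_days": sum(days) / len(days)}
        for week, days in sorted(lead_times.items())
    }


signals = weekly_delivery_signals(items)
for (year, week), values in signals.items():
    print(f"{year}-W{week:02d}: {values}")
```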

Tools to measure team performance

Use your delivery platform as the system of record. Jira or Linear manage planning, GitHub or GitLab track code, and your observability stack supports monitoring team performance. For automation, use CI and deployment tools. Together, these tools help you monitor team performance during incidents and measure teamwork on cross-functional projects. Dashboards simplify team performance measurement for stakeholders who need a single view.

Evaluating team dynamics

To evaluate team dynamics, it is essential to consider the following factors.

Cost efficiency

Cost efficiency is a factor that evaluates the financial effectiveness of a dedicated development team. It involves maximizing output and delivering high-quality results while minimizing costs and optimizing resource allocation. Cost efficiency directly impacts dedicated teams by influencing project profitability, resource utilization, and overall budget management.

To assess cost efficiency, consider the following aspects:

- Resource utilization. Analyze how effectively your team members are assigned to tasks and projects, ensuring their skills and expertise align with project requirements.

- Project budget and expenses. Regularly review and track project expenditures to identify areas of potential cost savings or cost overruns.

- Process improvements. Continuously identify and implement process optimizations to streamline workflows, reduce waste, and increase efficiency.

When assessing team dynamics, review handoffs, approval waits, and knowledge silos to keep collaboration healthy.

Productivity

Productivity is a critical factor that measures the efficiency and output of a dedicated development team. It refers to the team's ability to deliver work and achieve desired outcomes within a given timeframe. High productivity enables teams to accomplish more, meet project deadlines, and provide value to clients and stakeholders.

To assess productivity, track completed tasks, milestone achievements, and adherence to project schedules. Additionally, consider feedback from stakeholders, client satisfaction ratings, and team retrospectives to gain insights into productivity levels and identify areas for improvement.

Treat these signals as team success metrics and review trends over time rather than chasing daily variance.

Leverage automation to measure performance

Did you know that automation can reduce human error and save you valuable time, allowing your teams to focus on analyzing results and making data-driven decisions? It will also enable your teams to gather accurate and consistent data, streamline the measurement process, and gain real-time insights into performance metrics.

In addition to standard automation tools, incorporating digital employee experience solutions can provide deeper visibility into how system performance and usability affect team efficiency. These platforms support proactive IT management by tracking issues before they impact productivity, helping ensure a smoother digital workflow.

Once you've chosen your performance metrics, pick tools that can automate data collection and analysis for them. For example, Jenkins, CircleCI, or Travis CI can automate build and deployment processes, while SonarQube or CodeClimate can automatically assess code quality and generate reports.

The next step is integrating the selected tools into your development and testing workflows. Configure them to collect relevant data and generate performance reports regularly or upon specific triggers, such as code commits or deployment events.

Finally, review the automated performance reports and analyze the data to gain insights into your team's performance. Look for trends, patterns, and areas that require improvement or optimization.
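
When reviewing automated reports, a rolling average helps separate a real trend from week-to-week noise. The sketch below smooths a weekly metric and labels its direction; the window size, the tolerance, and the sample values are assumptions to tune for your own data.

```python
from statistics import mean


def rolling_mean(values: list[float], window: int) -> list[float]:
    """Mean of each consecutive run of `window` values."""
    if window < 1:
        raise ValueError("window must be at least 1")
    return [mean(values[i - window + 1 : i + 1]) for i in range(window - 1, len(values))]


def trend(values: list[float], window: int = 3, tolerance: float = 0.05) -> str:
    """Compare the first and last smoothed values: 'rising', 'falling', or 'flat'.

    A relative change within `tolerance` is treated as flat.
    """
    smoothed = rolling_mean(values, window)
    if len(smoothed) < 2 or smoothed[0] == 0:
        return "flat"
    change = (smoothed[-1] - smoothed[0]) / abs(smoothed[0])
    if change > tolerance:
        return "rising"
    if change < -tolerance:
        return "falling"
    return "flat"


# Made-up weekly median cycle time in days.
weekly_cycle_time = [5.0, 6.0, 4.0, 7.0, 8.0, 9.0]
smoothed_cycle_time = rolling_mean(weekly_cycle_time, 3)
print(smoothed_cycle_time, trend(weekly_cycle_time))
```

Whether "rising" is good or bad depends on the metric: a rising cycle time deserves attention, while rising deployment frequency is usually welcome.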

Automation also makes it easier to measure the success of a team, because it links shipped changes to user outcomes.

Team performance measuring guidance you can reuse

When someone asks how to evaluate team performance, show the scoreboard you use to monitor team performance and explain why each team metric matters.

- During planning, document strategies to monitor team performance and the metrics to measure team performance for the next increment.

- For a team new to continuous delivery, focus on how to measure the success of a team by tracking lead time and defect rate across two releases.

- If collaboration is the concern, discuss how to measure teamwork using review turnaround, cross-pairing hours, and shared ownership of modules.

- When you’re evaluating team performance after an incident, measure team effectiveness with MTTR, action follow-through, and customer impact.

- For quarterly reporting, outline how to measure team success with a small set of team performance metrics that map to business results.

- If your environment is complex, note that measuring work system performance reveals bottlenecks that individual stats miss.

- For transparency, publish a short note on how you track team performance and which tools you rely on to measure it.

FAQ – How do you measure team performance (quick answers)

Q: What are good metrics to measure team performance when shipping frequently?

A: Use lead time, deployment frequency, change failure rate, and time to restore. Pair them with outcome metrics so you can evaluate team performance beyond volume.

Q: How to measure team effectiveness without counting hours?

A: Trend reliability targets met, user tasks completed, and quality gates passed. These measure team effectiveness by outcomes rather than time spent.

Q: What should we watch in a hybrid setup?

A: Keep measuring team performance online with shared dashboards and async updates. This supports monitoring team performance across time zones.

Q: How to monitor team performance during high-risk periods?

A: Set daily check-ins, confirm priorities, and keep incident response ready. These strategies to monitor team performance reduce surprises.

Q: How to measure the success of a team that owns a platform?

A: Track MTTR, incident count, and adoption of platform capabilities. That’s how to measure the success of a team that enables others.

Q: What’s the simplest way to measure team performance for stakeholders?

A: Share a one-page team performance view with delivery, quality, and outcome sections. Keep it stable for comparison.

Conclusion

Measuring the performance of dedicated development teams is crucial for achieving project success and delivering high-quality results.

To recap: setting the right goals helps you establish clear objectives, track progress, and drive performance improvement. A few crucial performance metrics will provide insights into your team's efficiency and product quality.

Remember to also assess cost efficiency and productivity to optimize collaboration and resource utilization. A finishing touch is leveraging automation tools to streamline the measurement process, ensuring accurate data collection and analysis.

Our Merge crew understands the importance of performance measurement and offers dedicated product teams to meet your specific project requirements. With our skilled professionals and collaborative approach, we strive to deliver exceptional results while ensuring transparency and efficiency.
